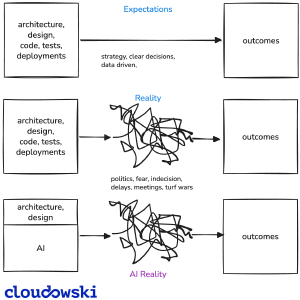

AI has become an indispensable tool for software development. I can’t imagine working without an LLM-powered assistant anymore. However, after months of intensive use, I’ve noticed something important: AI is great for writing applications, but it struggles with platform engineering code.

Here’s why platform engineering presents unique challenges for AI, and what we need to address to make it truly effective.

The Fundamental Difference

Software engineering has been around for decades. We have countless design patterns and practices such as Domain-Driven Design, and well-established best practices documented in books, blogs, and courses.

We have massive amounts of training data from millions of repositories. Developers write code in many different styles, but they draw on a relatively small set of shared patterns. This makes it relatively straightforward for AI to generate application code.

Platform engineering is different. It has few established design patterns. There are myriad ways to create environments, design CI/CD pipelines, manage deployments, and make systems flexible.

We have limited training data compared to application development. This fundamental difference creates several challenges when using AI for platform engineering.

Challenge 1: Lack of Established Patterns

Platform engineering lacks the rich ecosystem of design patterns that application development enjoys. While software engineering has well-documented patterns like MVC, Factory, or Observer, platform engineering is still evolving. AI models are trained on patterns, and without clear, established patterns, they struggle to generate consistent, high-quality platform code.

Every organization approaches platform engineering differently, making it harder for AI to learn from existing codebases. What works for one team’s GitOps setup might be completely wrong for another.

When I ask AI to create a Helm Chart, it often generates something generic that doesn’t align with my specific GitOps approach. I need to teach it our patterns, not just copy-paste from documentation.

Challenge 2: Context Is Everything (And Expensive)

Platform engineering requires deep understanding of your specific architecture, your processes and workflows, your tooling patterns and conventions, and your organizational constraints. AI needs extensive context to generate platform code that fits your environment, and large contexts are expensive. I burned through $20 in less than two weeks!

Without proper context, AI generates code that looks correct but doesn’t match your patterns. I’ve tried GitHub Copilot, Cursor, and Antigravity. The built-in knowledge isn’t enough.

These tools need to be fed detailed architecture descriptions, process documentation, pattern examples specific to your organization, and tool usage conventions like which ArgoCD Application options to use or how to structure GitLab CI templates.

Context consumption is a real concern. You need to limit the number of files in context, use summaries instead of full files when possible, choose models with smaller contexts when appropriate, and leverage MCP (Model Context Protocol) for more efficient context management. The companies behind these models aren’t incentivized to help you pay less, so learning to manage context effectively becomes a critical skill.
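To make the "limit files, prefer summaries" advice concrete, here is a minimal sketch of a context budget in Python. Everything in it is an assumption for illustration: the `ContextItem` type, the priority numbers, and especially the four-characters-per-token heuristic, which is only a rough estimate and not how any particular model counts tokens.

```python
from dataclasses import dataclass

# Assumption: a crude heuristic, not a real tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate based on character count."""
    return max(1, len(text) // CHARS_PER_TOKEN)


@dataclass
class ContextItem:
    """A file that could be sent to the model (hypothetical structure)."""
    path: str
    full_text: str
    summary: str = ""   # optional hand-written or generated summary
    priority: int = 0   # higher means more important


def build_context(items: list[ContextItem], budget_tokens: int) -> list[tuple[str, str]]:
    """Greedy selection: highest priority first.

    Each item is included in full if it fits the remaining budget,
    as a summary if only the summary fits, and skipped otherwise.
    Returns (path, "full" | "summary") pairs in selection order.
    """
    chosen: list[tuple[str, str]] = []
    remaining = budget_tokens
    for item in sorted(items, key=lambda i: i.priority, reverse=True):
        full_cost = estimate_tokens(item.full_text)
        if full_cost <= remaining:
            chosen.append((item.path, "full"))
            remaining -= full_cost
            continue
        if item.summary:
            summary_cost = estimate_tokens(item.summary)
            if summary_cost <= remaining:
                chosen.append((item.path, "summary"))
                remaining -= summary_cost
    return chosen
```

With a small budget, a high-priority rules file goes in whole, a large values file is reduced to its summary, and a low-priority log with no summary is dropped. The greedy order is a design choice for simplicity; it does not try to find the optimal packing.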

Challenge 3: Teaching AI Your Specific Patterns

Platform engineering is highly contextual. What works for one organization might be wrong for another. AI needs to learn your specific patterns, not just general best practices.

Generic AI suggestions often don’t align with your GitOps approach. You need to teach AI about your Helm Chart structure, specify which ArgoCD Application and ApplicationSet options to use, define how to construct reusable GitLab CI templates, explain how to comply with your Kubernetes policies, and spell out many more rules.
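Some of these rules can also be checked mechanically. The sketch below, in Python, validates an ArgoCD Application manifest that has already been parsed into a dict. The field names (`spec.project`, `spec.syncPolicy.automated.prune`, `selfHeal`, `syncOptions`) are real Application fields, but the three conventions being enforced are hypothetical examples of an organization’s rules, not recommendations.

```python
def check_application(app: dict) -> list[str]:
    """Return a list of convention violations for a parsed ArgoCD Application.

    The conventions enforced here are illustrative assumptions:
      1. spec.project must not be "default"
      2. automated sync must enable prune and selfHeal
      3. syncOptions must include CreateNamespace=true
    """
    if app.get("kind") != "Application":
        return [f"expected kind 'Application', got {app.get('kind')!r}"]

    problems: list[str] = []
    spec = app.get("spec") or {}

    # Convention 1: every Application belongs to a dedicated AppProject.
    if spec.get("project", "default") == "default":
        problems.append("spec.project must not be 'default'")

    sync_policy = spec.get("syncPolicy") or {}

    # Convention 2: automated sync with pruning and self-healing.
    automated = sync_policy.get("automated")
    if automated is None:
        problems.append("spec.syncPolicy.automated is required")
    else:
        for flag in ("prune", "selfHeal"):
            if not automated.get(flag):
                problems.append(f"spec.syncPolicy.automated.{flag} must be true")

    # Convention 3: the target namespace is created by ArgoCD.
    if "CreateNamespace=true" not in (sync_policy.get("syncOptions") or []):
        problems.append("spec.syncPolicy.syncOptions must include CreateNamespace=true")

    return problems
```

An empty list means the manifest follows the encoded rules. Anything else can be pasted back to the agent as feedback, which is often more precise than describing the problem in prose.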

I maintain an AGENTS.md file with such rules and patterns. This is absolutely critical! Without this documentation, AI agents would generate code that doesn’t match my organization’s specific approach to platform engineering.

My workflow has evolved into a structured process that ensures quality while leveraging AI effectively:

- Request the change: I ask the agent to make a specific change, whether it’s creating a new Helm Chart, modifying an ArgoCD Application, or updating a GitLab CI template.

- Agent implementation: The agent thinks through the problem, considers the context I’ve provided, and implements the change based on the patterns in AGENTS.md.

- Review and verification: I carefully review what the agent created, checking for hallucinations, outdated knowledge, or opportunities to simplify the solution. This is where domain expertise is crucial - I need to know if the approach is correct and optimal.

- Request corrections: If something isn’t right, I provide feedback and ask for corrections. The agent learns from this feedback and adjusts its approach.

- Document new patterns: When I discover a better way to do something or identify a pattern that should be followed, I add it as a new rule for future reference.

- Update AGENTS.md: Finally, I ask the agent to update the AGENTS.md file with the new rule or pattern. I’m so lazy, I don’t even touch it manually anymore 😄.

This iterative process ensures that the agent gets better over time and that my platform engineering patterns are consistently applied across all changes.
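The last two steps of the workflow above can be sketched as a small Python helper that adds a rule to the text of a rules file. It assumes a layout where rules are "- " bullets grouped under "## " section headings. That layout and the section names are assumptions made for this example, since AGENTS.md is free-form and yours may be organized differently.

```python
def add_rule(doc: str, section: str, rule: str) -> str:
    """Add a bullet rule under a '## <section>' heading, idempotently.

    Assumed layout (hypothetical): '## ' headings with '- ' bullets beneath.
    Returns the document unchanged if the exact rule already exists.
    Creates the section at the end of the document if it is missing.
    """
    heading = f"## {section}"
    bullet = f"- {rule.strip()}"
    lines = doc.splitlines()

    if bullet in lines:
        return doc  # rule already recorded, nothing to do

    if heading not in lines:
        if lines and lines[-1].strip():
            lines.append("")  # blank line before the new section
        lines += [heading, "", bullet]
        return "\n".join(lines) + "\n"

    # Find where this section ends: the next '## ' heading or end of file.
    start = lines.index(heading) + 1
    end = start
    while end < len(lines) and not lines[end].startswith("## "):
        end += 1

    # Insert after the last non-blank line of the section.
    insert_at = end
    while insert_at > start and not lines[insert_at - 1].strip():
        insert_at -= 1
    lines.insert(insert_at, bullet)
    return "\n".join(lines) + "\n"
```

Because the function works on text and returns text, it is easy to review the diff before writing the file back, whether a person or an agent makes the change.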

The difference between tools is striking. Cursor “absorbed” the rules and started working well almost from the beginning. Antigravity didn’t respect them and eventually gave up, asking for help (yes, really!).

This shows how crucial it is for agents to actually read and apply instructions. The quality of your instructions directly impacts the quality of AI’s output.

Challenge 4: MCP Ecosystem Is Still Immature

Model Context Protocol (MCP) is critical for AI agents to interact with real systems, but the ecosystem is still in its infancy. MCP servers are essential for agents to access tools and services, yet many are incomplete or unstable.

Some tools are locked behind expensive licenses - GitLab’s official MCP server requires higher-tier licenses, for example. Community alternatives often lack features or break unexpectedly.

I built my own MCP server for OCI registries because nothing suitable existed. The GitLab MCP server I was using had issues that were never fixed.

I switched to another one, but it can’t perform merges. All my Git operations went through MCP, showing how critical these servers are.

The MCP ecosystem needs time to mature. We need stable, feature-complete servers for common tools. Until then, running commands directly sometimes works better than MCP. In principle MCP should be the better option, especially with proper elicitation features that let agents better understand the available options before taking action.
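One way to live with incomplete servers is to decide per operation whether to go through MCP or fall back to a plain command. The Python sketch below only plans the call and does not execute anything. The operation names and the `mcp_tools` set are hypothetical and not taken from any real MCP server; only the `git` command lines are real.

```python
from typing import Callable

# Illustrative fallbacks: operation name -> function building a git argv.
# The operation names are hypothetical; the git commands themselves are real.
CLI_FALLBACKS: dict[str, Callable[[dict], list[str]]] = {
    "merge_branch": lambda a: ["git", "merge", "--no-ff", a["source"]],
    "push_branch": lambda a: ["git", "push", "origin", a["branch"]],
}


def plan_operation(
    operation: str,
    args: dict,
    mcp_tools: set[str],
    fallbacks: dict[str, Callable[[dict], list[str]]] = CLI_FALLBACKS,
) -> dict:
    """Prefer an MCP tool if the server offers one, else plan a CLI call.

    `mcp_tools` stands in for whatever tool names the connected server
    advertises (an assumption of this sketch). Nothing is executed here.
    """
    if operation in mcp_tools:
        return {"via": "mcp", "tool": operation, "args": args}
    builder = fallbacks.get(operation)
    if builder is None:
        raise LookupError(f"no MCP tool or CLI fallback for {operation!r}")
    return {"via": "cli", "argv": builder(args)}
```

If the server offers `push_branch` but not `merge_branch`, pushes are routed through MCP and merges are planned as a `git merge --no-ff` command. The caller can then pass the `argv` list to `subprocess.run`.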

Challenge 5: AI Is a Force Multiplier, Not a Replacement

AI can’t replace platform engineering knowledge. It’s a powerful assistant, but it requires supervision, verification, and someone who understands the system. AI often proposes overly complicated solutions when simpler ones exist.

It can make mistakes with syntax, outdated patterns, or wrong approaches. Without domain knowledge, you can’t verify if the agent’s work is correct. It’s like working with a junior engineer who needs constant teaching and correction.

The good news: you can sleep soundly. AI won’t be managing platforms independently anytime soon. It needs oversight and someone who understands the system.

The bad news: you can’t coast if you want to stay relevant. Without understanding Kubernetes, Linux, containers, Helm, Git, and other platform fundamentals, you can’t verify what the agent does. AI is already replacing junior positions, similar to what happened in software development.

AI is an excellent force multiplier when you understand the platform yourself, can verify and correct AI’s work, know when to simplify AI’s overly complex suggestions, and use it to speed up repetitive tasks while focusing on architecture and decisions. Instead of hours spent on tedious tasks, you can focus on the bigger picture.

The Path Forward

The platform engineering community needs to develop and document more design patterns that AI can learn from. This might involve creating pattern libraries for common platform engineering scenarios, documenting GitOps patterns and best practices, and sharing reusable templates and conventions. The more patterns we establish, the better AI will become at platform engineering tasks.

Fine-tuning models on your specific patterns could be the next step. Instead of constantly providing context, a fine-tuned model would already understand your Helm Chart structure, ArgoCD Application patterns, GitLab CI template conventions, and GitOps workflows. This could be the breakthrough that makes AI truly effective for platform engineering.

Despite the challenges, start using AI for platform engineering today. Test different editors and models. Learn how to work with AI effectively.

This is the moment to get in and figure out how to make it work for your specific needs. The landscape is changing rapidly, and those who learn to work with AI now will have a significant advantage.

Conclusion

AI for platform engineering is awesome, but it’s not magic. The challenges are real: lack of established patterns compared to application development, context requirements that are both extensive and expensive, the need to teach AI your specific patterns and conventions, an immature MCP ecosystem that needs time to stabilize, and AI as a force multiplier rather than a replacement for platform engineering knowledge.

The key is understanding that platform engineering requires a different approach to AI than application development. We need better patterns, better context management, and better tools. But most importantly, we need platform engineers who understand their systems deeply enough to guide and verify AI’s work.

If you want to leverage AI for platform engineering, start now. Experiment, learn, and contribute to building the patterns and tools that will make AI truly effective for our field.

The future of platform engineering will be shaped by those who figure out how to make AI work effectively, not by those who wait for it to become perfect.
