I decided to bet on AI agents and started writing them a long time ago. There are many reasons, but the main one is pure laziness. I’ve always wanted to automate things, and that’s why I became a DevOps engineer in the first place.

For me it’s a natural progression in my career that also feeds my innate curiosity, which led me from Linux systems through the cloud and containers to Kubernetes and Cloud Native technologies.

For now, I cannot reveal what my first agent will do, but I’ll share a few lessons from my perspective. As someone focused on Platform Engineering, my view on AI agents is skewed toward improving platforms for all kinds of apps (including the “vibe-coded” ones).

1. Why it’s not that hard (anymore)

I remember when I started digging into AI agents over a year ago. Back then the most popular frameworks were LangChain, LangGraph, and CrewAI. I even managed to write a simple agent, but it wasn’t something I enjoyed. It wasn’t easy either. These frameworks work, but they are too complex and have a steep learning curve.

There is one thing I do differently this time: I focus mostly on requirements and architecture and let AI write the code. That’s right, I realized I won’t be as good at Python as LLMs are, but I can use my thinking skills to describe what I want and how it should work.

This realization changed my approach. I spend most of my time analyzing the features of my agent and how to implement them with an AI framework.

- How do I want to use sessions?

- What should the agent remember and store in its memory?

- What design patterns should I use to make it flexible and extensible?

- What tools should I use and how should I provide them?

- What parts should be sent to the LLM and what should be handled by deterministic code?

- How do I evaluate it?

These are the questions I try to answer. Testing is especially important when developing an agent.

2. Why testing is crucial

AI agents pose a huge challenge: because they rely on LLMs, their output can be nondeterministic. That’s the nature of LLMs, and it’s a challenge you need to address when you implement your own agent. Test your agent well, or you’ll get disappointing results.

Things get even more complicated when you realize how many LLMs there are and how differently they can interpret your prompts and the results returned by tools. That’s why testing is critical. At first, I tested my agents as part of the regular test suite: integration and end-to-end tests that included calls to LLMs.

Fortunately, there’s a better way. Many frameworks offer built-in evaluation methods; for example, the Google ADK framework has decent evaluation support. Evaluations make the tests much more robust, predictable, and easier to maintain.
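Evaluation frameworks typically check, among other things, whether the agent called the expected tools with the expected arguments. The sketch below is framework-agnostic and is not ADK’s API: it assumes a hypothetical agent callable that returns its tool calls as `(name, arguments)` pairs, and it repeats each case several times because the same prompt can produce different trajectories.

```python
from dataclasses import dataclass
from typing import Callable

ToolCall = tuple[str, dict]  # (tool name, arguments)


@dataclass
class EvalCase:
    prompt: str
    expected_calls: list[ToolCall]


def trajectory_score(actual: list[ToolCall], expected: list[ToolCall]) -> float:
    """1.0 only if the agent made exactly the expected tool calls, in order; else 0.0."""
    return 1.0 if actual == expected else 0.0


def evaluate(agent: Callable[[str], list[ToolCall]], cases: list[EvalCase], runs: int = 3) -> float:
    """Average trajectory score over several runs per case, since LLM output varies between runs."""
    scores = [
        trajectory_score(agent(case.prompt), case.expected_calls)
        for case in cases
        for _ in range(runs)
    ]
    return sum(scores) / len(scores)
```

In a real suite, the hypothetical agent callable would wrap the actual agent, and the average would be compared against a threshold instead of requiring a perfect score.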

An AI agent is just another piece of software. It may be a bit different and expected to perform amazing things with a bit of LLM “magic”, but it’s still software. Every good piece of software deserves proper tests, so don’t forget this crucial part, and use evaluation frameworks where possible.

3. Why freedom is actually bad for agents

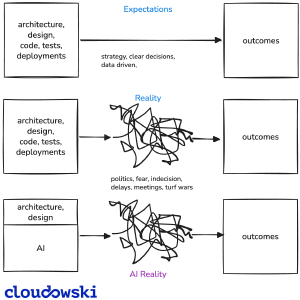

The media and less technical people imagine that AI is a panacea for all problems and is so powerful that it can solve every old problem much better. That’s only partially true, and there are a couple of caveats.

To create efficient AI agents you need to become a manager. That’s right — you need to start thinking about how to best instruct the agent to do the work. It requires shifting perspective from focusing purely on technology to focusing on providing clear goals and the right tools.

With AI agents there’s also one important ingredient: the freedom you give the agent. As I’ve learned, it is critical to both success and cost.

My first agents had a lot of freedom. I was focused on writing proper prompts and letting them use the tools I thought were necessary for the tasks I had defined. I soon discovered it wasn’t working as I thought it would.

The tools I provided were too generic. I let the agent provide many of the necessary parameters, which often led to failed attempts to use the tools correctly. That generated too many calls to the LLM and too many errors before getting things right — and I wasted a lot of tokens.

I trusted LLMs too much and forgot about their nondeterministic nature. For the tasks I defined, I should have done things differently — and I did.

A much better approach is to limit the freedom of AI agents. I do it in two ways. First, the prompt: I define clear goals (what to do), restrictions (what NOT to do) and examples (here’s HOW to do it).
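One way to keep that structure consistent is to build the prompt from explicit parts instead of free text. This is a minimal sketch; the `AgentInstructions` class, the section labels, and the sample content are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AgentInstructions:
    """Hypothetical prompt structure: what to do, what NOT to do, and HOW to do it."""

    goal: str
    restrictions: list[str] = field(default_factory=list)
    examples: list[tuple[str, str]] = field(default_factory=list)  # (request, expected action)

    def render(self) -> str:
        """Assemble the system prompt in a fixed order: goal, restrictions, examples."""
        lines = [f"GOAL: {self.goal}", "", "RESTRICTIONS (never do these):"]
        lines += [f"- {r}" for r in self.restrictions]
        lines += ["", "EXAMPLES:"]
        for request, action in self.examples:
            lines += [f"Request: {request}", f"Action: {action}", ""]
        return "\n".join(lines).rstrip()
```

Keeping the parts separate makes it easy to add a restriction or an example after a failed evaluation without rewriting the whole prompt.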

The second is to limit how tools are used. Instead of giving unlimited access to an API, I use Pydantic models to describe inputs and outputs in a deterministic way. I also write wrappers for functions used as tools to validate requests before they reach their destinations.
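A minimal sketch of such a wrapper, assuming Pydantic v2. The `scale_deployment` tool, its fields, and the stubbed API call are hypothetical placeholders; the point is that the LLM only ever sees the validating wrapper, so malformed arguments are rejected before any request is sent.

```python
from typing import Literal

from pydantic import BaseModel, Field, ValidationError


class ScaleDeploymentInput(BaseModel):
    """Hypothetical tool input: every field is constrained, nothing is free-form."""

    namespace: Literal["staging", "production"]
    deployment: str = Field(pattern=r"^[a-z0-9]([-a-z0-9]*[a-z0-9])?$", max_length=63)
    replicas: int = Field(ge=1, le=10)


class ToolResult(BaseModel):
    ok: bool
    message: str


def _scale_deployment(args: ScaleDeploymentInput) -> ToolResult:
    # Stub standing in for the real API call (e.g. a Kubernetes client); assumed here.
    return ToolResult(ok=True, message=f"{args.namespace}/{args.deployment} -> {args.replicas} replicas")


def scale_deployment_tool(raw_args: dict) -> dict:
    """Wrapper exposed to the LLM: validate first, call the API only if the input is valid."""
    try:
        args = ScaleDeploymentInput.model_validate(raw_args)
    except ValidationError as exc:
        # Return a compact, structured error the LLM can act on instead of hitting the API.
        errors = [f"{'.'.join(map(str, e['loc']))}: {e['msg']}" for e in exc.errors()]
        return ToolResult(ok=False, message="; ".join(errors)).model_dump()
    return _scale_deployment(args).model_dump()
```

A short, field-by-field error message tends to be cheaper for the LLM to correct than a raw API error.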

This approach is much faster, more reliable, and cheaper.

LLMs are smart, but I don’t rely on them too much. I let them connect the dots, but it’s better to control which dots they can use.

4. Spend your tokens wisely

Tokens are the new currency in the AI world. You can very quickly spend a huge amount of them by letting the LLM think extensively about the problem you want it to solve, especially if it’s defined in an ambiguous way. This reminds me of how cloud providers want you to use as many services as possible, even if they offer features you don’t really need.

That’s understandable when you want to solve a single problem and receive results without further interaction with the LLM. With AI agents it’s a different story. They are designed to run continuously and process requests in unknown quantities. Thus they need to be efficient and frugal.

I use a couple of techniques to address this challenge. First is the restricted-freedom approach and the careful use of tools I described above. Next is measuring the tokens used by the agent and improving prompts to be more specific. After all, you can’t control something you don’t measure.
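Most provider SDKs report input and output token counts for each call, but the field names differ between providers, so this sketch takes plain integers. The `TokenUsageTracker` class and the step names are hypothetical; the idea is to attribute usage to agent steps so the most expensive prompts and tools stand out.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class TokenUsageTracker:
    """Accumulates token usage per agent step (e.g. per prompt or tool) across LLM calls."""

    input_tokens: dict[str, int] = field(default_factory=lambda: defaultdict(int))
    output_tokens: dict[str, int] = field(default_factory=lambda: defaultdict(int))
    calls: dict[str, int] = field(default_factory=lambda: defaultdict(int))

    def record(self, step: str, input_tokens: int, output_tokens: int) -> None:
        self.input_tokens[step] += input_tokens
        self.output_tokens[step] += output_tokens
        self.calls[step] += 1

    def report(self) -> list[tuple[str, int, int, float]]:
        """Rows of (step, calls, total tokens, average tokens per call), most expensive first."""
        rows = []
        for step, n in self.calls.items():
            total = self.input_tokens[step] + self.output_tokens[step]
            rows.append((step, n, total, total / n))
        return sorted(rows, key=lambda row: row[2], reverse=True)
```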

Another technique to decrease costs is caching. Most model providers support prompt caching, some automatically and some only with explicit configuration. Caching can save tokens, especially for agents that perform repeatable tasks with varying inputs.

Tools generate a significant portion of token costs due to the data sent to LLMs. When I make API requests in tool calls, I try to limit the amount of data returned. If I can limit the scope of a query, less data is sent and fewer tokens are used. Often this is achieved by selecting only the fields significant for the agent.

I prefer less-cluttered data and use a more detailed view only when it’s really needed (for example, via a different tool or with proper prompt instructions).
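A minimal sketch of trimming tool results before they reach the LLM. The Kubernetes-style pod dictionary and the `POD_SUMMARY_FIELDS` list are illustrative assumptions; where an API supports server-side field selection, that is usually better, since less data is transferred as well.

```python
import json

# Hypothetical fields the agent needs for a summary; everything else stays out of the prompt.
POD_SUMMARY_FIELDS = ["metadata.name", "status.phase", "spec.nodeName"]


def _get_path(obj: object, path: str) -> object:
    """Follow a dot-separated path through nested dicts; return None if any key is missing."""
    for key in path.split("."):
        if not isinstance(obj, dict) or key not in obj:
            return None
        obj = obj[key]
    return obj


def summarize_items(items: list[dict], fields: list[str]) -> str:
    """Trim API results to the selected fields and serialize them compactly for the LLM."""
    trimmed = [{path: _get_path(item, path) for path in fields} for item in items]
    return json.dumps(trimmed, separators=(",", ":"))
```

A separate "detailed" tool could return the full object for a single item when the agent really needs it.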

5. Run your own LLM or use hosted services?

When I bought my M4 Pro MacBook a year ago, I knew I wanted to run LLMs locally, which is why I configured it with 48 GB of RAM. I was excited when I first ran the Llama 3 model with Ollama, and I was full of hope that it would let me use my own hardware for developing AI agents.

Not all models available in Ollama support the tool calling that agents require, but that’s not the only limitation. I tested DeepSeek-R1, GLM-4.7, Qwen-3, and many more. They all failed. Why?

Open-weight models that you can run locally are simply not as capable as proprietary hosted LLMs. I was limited by 48 GB of memory, but I still ran versions with over 30 billion parameters. I didn’t need an omnipotent model, just one that would properly use the tools I provided, and I was hugely disappointed.

Maybe I should have written better prompts or tuned things more, but this experience discouraged me from relying on local models. They were also very slow. I can accept slow responses, but the results were disappointing.

So yes, for now I would stick with LLMs from large providers such as OpenAI, Google, Microsoft, or Anthropic. It looks like they are all fighting for attention and building a new customer base for LLM services, often charging less than they actually spend to run the models.

In my experiments, they were better, faster, and more reliable. The value provided by AI agents is significant enough that the cost of hosted LLMs is often justified.

Maybe this will change in the future, but for more complex agents I would stick with proprietary, smarter LLMs for now.

6. Which framework to choose?

I tried to write my first agents over a year ago. I used LangChain and LangGraph which were the most mature frameworks back then. It wasn’t a pleasant experience. These frameworks are very generic and have a steep learning curve.

Maybe if I had spent more time experimenting and been more persistent, I could have done more. But I wasn’t: I gave up, thinking it was too complex and that I wasn’t good enough.

Then I came across n8n, but I soon realized it wasn’t what I was looking for (its licensing is also an issue for commercial products). It looked to me like a tool for building agentic workflows rather than a framework for creating autonomous agents.

I want my agents to run in the background. I don’t need a fancy UI. I may add one if needed, but the core value is in the way the agent handles tasks on its own.

At the time of writing, OpenClaw is the hottest AI bot on the planet. I decided to give it a try as it looked impressive.

I managed to run it for a few tasks and it is very versatile, mostly thanks to the growing number of skills it uses to interact with many services. It’s cool if you want a personal assistant. I guess that’s its biggest selling point — automate tedious tasks and impress your friends.

When you write an autonomous AI agent, or create a team of such agents, you need a proper framework. For my use cases — handling tasks from DevOps and Platform Engineering — I needed a well-documented, stable, and extensible framework in Python. My agents run mostly on Kubernetes and use many APIs to perform tasks.

Here are my top 3 frameworks at the moment:

Each of them has pros and cons. Most try to persuade you to use services provided by their creators, or add friction when you want to use other options (e.g., for long-term memory).

Fortunately they all support the use of tools and MCP servers. This allows my agents to interact with the services I want to manage or use to perform complex tasks.

These are the most important features I need and use:

- Long-term memory

- Support for MCP

- Support for the most popular LLMs (including local models run with Ollama)

- Excellent documentation (preferably with llms.txt)

- Support for evaluation

- Support for A2A

7. Software development practices FTW

I’m not a pro when it comes to software development. I’ve spent my time building skills in Platform Engineering. That work let me use my innate curiosity to analyze and understand how many systems work, but somehow I never really needed to become a software developer.

The emergence of AI and its fantastic ability to write code in almost any language has changed how I approach building agents. I need to build them like regular software, with a focus on good software development practices. LLMs take care of the code; my job is to make sure that code is testable, extensible, and easy to understand, both for me and for the AI that will make future improvements.

So instead of polishing my programming skills, I turned my attention to architecture. My prompts reference patterns and principles such as the factory pattern, dependency injection, and the single-responsibility principle.
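Here is a small sketch of how these ideas fit together in an agent component. All names (`LLMClient`, `FakeLLM`, `IncidentSummarizer`, `make_llm`) are hypothetical, and only a fake provider is implemented; in practice each real provider would get a thin adapter that satisfies the same protocol.

```python
from typing import Protocol


class LLMClient(Protocol):
    """Anything that can turn a prompt into text; real providers would get thin adapters."""

    def complete(self, prompt: str) -> str: ...


class FakeLLM:
    """Deterministic stand-in for tests: returns a canned answer and records prompts."""

    def __init__(self, answer: str) -> None:
        self.answer = answer
        self.prompts: list[str] = []

    def complete(self, prompt: str) -> str:
        self.prompts.append(prompt)
        return self.answer


class IncidentSummarizer:
    """Single responsibility: summarize an incident. The LLM client is injected, not created here."""

    def __init__(self, llm: LLMClient) -> None:
        self.llm = llm

    def summarize(self, logs: str) -> str:
        return self.llm.complete(f"Summarize the incident in one sentence:\n{logs}").strip()


def make_llm(provider: str, **kwargs) -> LLMClient:
    """Factory: pick an implementation from configuration. Only 'fake' exists in this sketch."""
    if provider == "fake":
        return FakeLLM(kwargs.get("answer", ""))
    raise ValueError(f"unknown provider: {provider}")
```

Because the client is injected, the same component can run against a hosted model in production and against a deterministic fake in unit tests.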

As my codebase grows, these standards help me build mental models of the system. It’s my responsibility to understand component relationships, data flow, and ideas for enhancements and fixes. This is fundamental — you can skip low-level coding, but not the higher-level architectural layer.

What I also realized is how important data modeling is for AI agents. My agents benefit from well-defined structures for the data they operate on.

They are written in Python and I use Pydantic to provide strict field definitions. It works very well.

Thanks to validation rules, my agents receive well-defined input formats and clear expectations for outputs. That makes it easier to pass information between LLMs and tools.
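A minimal sketch of validating LLM output before it is passed to a tool, assuming Pydantic v2. The `RemediationPlan` model and its fields are hypothetical; returning `None` lets the caller retry or ask the LLM again instead of acting on malformed data.

```python
from typing import Literal, Optional

from pydantic import BaseModel, Field, ValidationError


class RemediationPlan(BaseModel):
    """Hypothetical structure the LLM must return; the tool layer consumes it directly."""

    action: Literal["restart", "scale", "rollback", "none"]
    target: str = Field(min_length=1)
    reason: str = Field(max_length=200)
    confidence: float = Field(ge=0.0, le=1.0)


def parse_plan(llm_output: str) -> Optional[RemediationPlan]:
    """Validate raw LLM JSON (invalid JSON also raises ValidationError); None means 'retry'."""
    try:
        return RemediationPlan.model_validate_json(llm_output)
    except ValidationError:
        return None
```

`RemediationPlan.model_json_schema()` produces a JSON Schema that can be included in the prompt, or passed to a provider’s structured-output option where one is supported.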

Conclusion

AI agents are the next level for DevOps engineers and platform architects like me. The capabilities of LLMs can be used to create autonomous agents that maintain and improve platforms for any kind of app.

This will also make it easier to enforce the practices we’ve been preaching about, such as zero-trust architecture, chaos engineering, and full observability. It’s never been easier to implement them, and now we have excellent tools to help.

I predict platforms that don’t embrace AI will be outpaced by those enhanced with AI tools, especially autonomous agents. That’s why I spend my time preparing and being part of this inevitable future.

This article was written by a human. AI was only used to correct typos and errors.
